Introduction

Tiders is an open-source framework that simplifies getting data out of blockchains and into your favorite tools. Whether you are building a DeFi dashboard, tracking NFT transfers, or running complex analytics, Tiders handles the heavy lifting of fetching, cleaning, transforming and storing blockchain data.

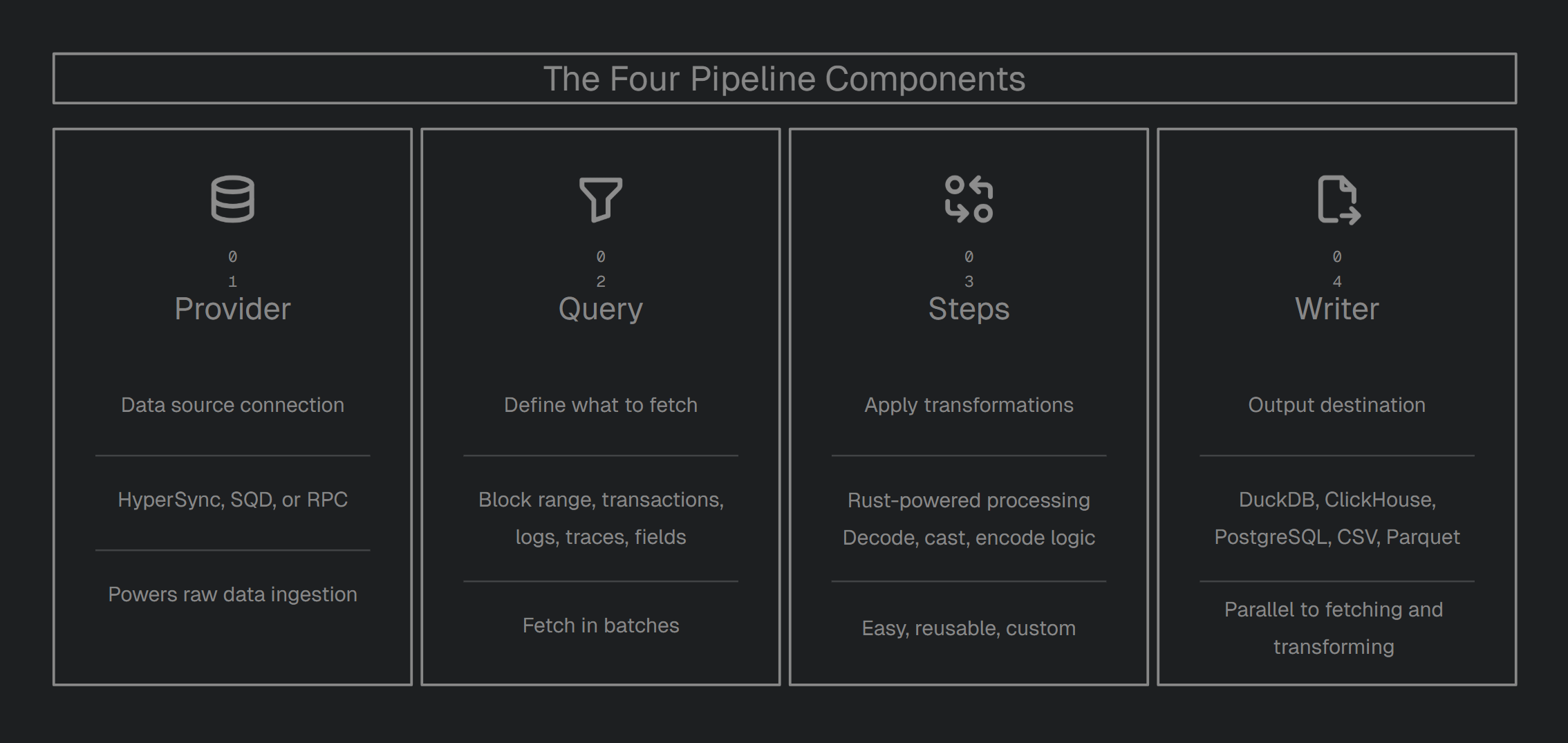

Tiders is modular. A Tiders pipeline is built from four components:

| Component | Description |

|---|---|

Provider | Data source (HyperSync, SQD, or RPC) |

Query | What data to fetch (block range, transaction, logs, filters, field selection) |

Steps | Transformations to apply (decode, cast, encode, custom) |

Writer | Output destination |

Why Tiders?

Most indexers lock you into a specific platform or database. Tiders is built to be modular, meaning you can swap parts in and out without breaking your setup:

- Swap Providers: Don’t like your current data source? Switch between HyperSync, SQD, or a standard RPC node by changing one line of code.

- Plug-and-Play data transformations: Need to decode smart contract events or change data types? Use our built-in Rust-powered steps or write your own custom logic.

- Write Anywhere: Send your data to a local DuckDB file for prototyping, or a production-grade ClickHouse or PostgreSQL instance when you’re ready to scale.

- Modular Reusable Pipelines: Protocols often reuse the same data structures. You don’t need write modules from scratch every time. Since Tiders pipelines are regular Python objects, you can build functions around them, reuse across pipelines, or set input parameters to customize as needed.

Two ways to use Tiders

| Mode | How | When to use |

|---|---|---|

| Python SDK | Write a Python script, import tiders | Full control, custom logic, complex pipelines |

| CLI (No-Code) | Write a YAML config, run tiders start | Quick setup, no Python required, standard pipelines |

Both modes share the same pipeline engine.

You can also use tiders codegen to generate a Python script from a YAML config — a quick way to move from no-code to full Python control.

Key Features

- Continuous Ingestion: Keep your datasets live and fresh. Tiders can poll the chain head to ensure your data is always up to date.

- Switch Providers: Move between HyperSync, SQD, or standard RPC nodes with a single config change.

- No Vendor Lock-in: Use the best data providers in the industry without being tied to their specific platforms or database formats.

- Custom Logic: Easily extend and customize your pipeline code in Python for complete flexibility.

- Advanced Analytics: Seamlessly works with industry-standard tools like Polars, Pandas, DataFusion and PyArrow as the data is fetched.

- Multiple Outputs: Send the same data to a local file and a production database simultaneously.

- Rust-Powered Speed: Core tasks like decoding and transforming data are handled in Rust, giving you massive performance without needing to learn a low-level language.

- Parallel Execution: Tiders doesn’t wait around. While it’s writing the last batch of data to your database, it’s already fetching and processing the next one in the background.

Data Providers

Connect to the best data sources in the industry without vendor lock-in. Tiders decouples the provider from the destination, giving you a consistent way to fetch data.

Tiders can support new providers. If your project has custom APIs to fetch blockchain data, especially ones that support server-side filtering, you can create a client for it, similar to the Tiders RPC client. Get in touch with us.

Transformations

Leverage the tools you already know. Tiders automatically convert data batch-by-batch into your engine’s native format, allowing for seamless, custom transformations on every incoming increment immediately before it is written.

| Engine | Data format in your function | Best for |

|---|---|---|

| Polars | Dict[str, pl.DataFrame] | Fast columnar operations, expressive API |

| Pandas | Dict[str, pd.DataFrame] | Familiar API, complex row-level operations |

| DataFusion | Dict[str, datafusion.DataFrame] | SQL-based transformations, lazy evaluation |

| PyArrow | Dict[str, pa.Table] | Zero-copy, direct Arrow manipulation |

Supported Output Formats

Whether local or a production-grade data lake, Tiders handles the schema mapping and batch-loading to your destination of choice.

| Destination | Type | Description |

|---|---|---|

| DuckDB | Database | Embedded analytical database, great for local exploration and prototyping |

| ClickHouse | Database | Column-oriented database optimized for real-time analytical queries |

| PostgreSQL | Database | General-purpose relational database with broad ecosystem support |

| Apache Iceberg | Table Format | Open table format for large-scale analytics on data lakes |

| Delta Lake | Table Format | Storage layer with ACID transactions for data lakes |

| Parquet | File | Columnar file format, efficient for analytical workloads |

| CSV | File | Plain-text format, widely compatible and easy to inspect |

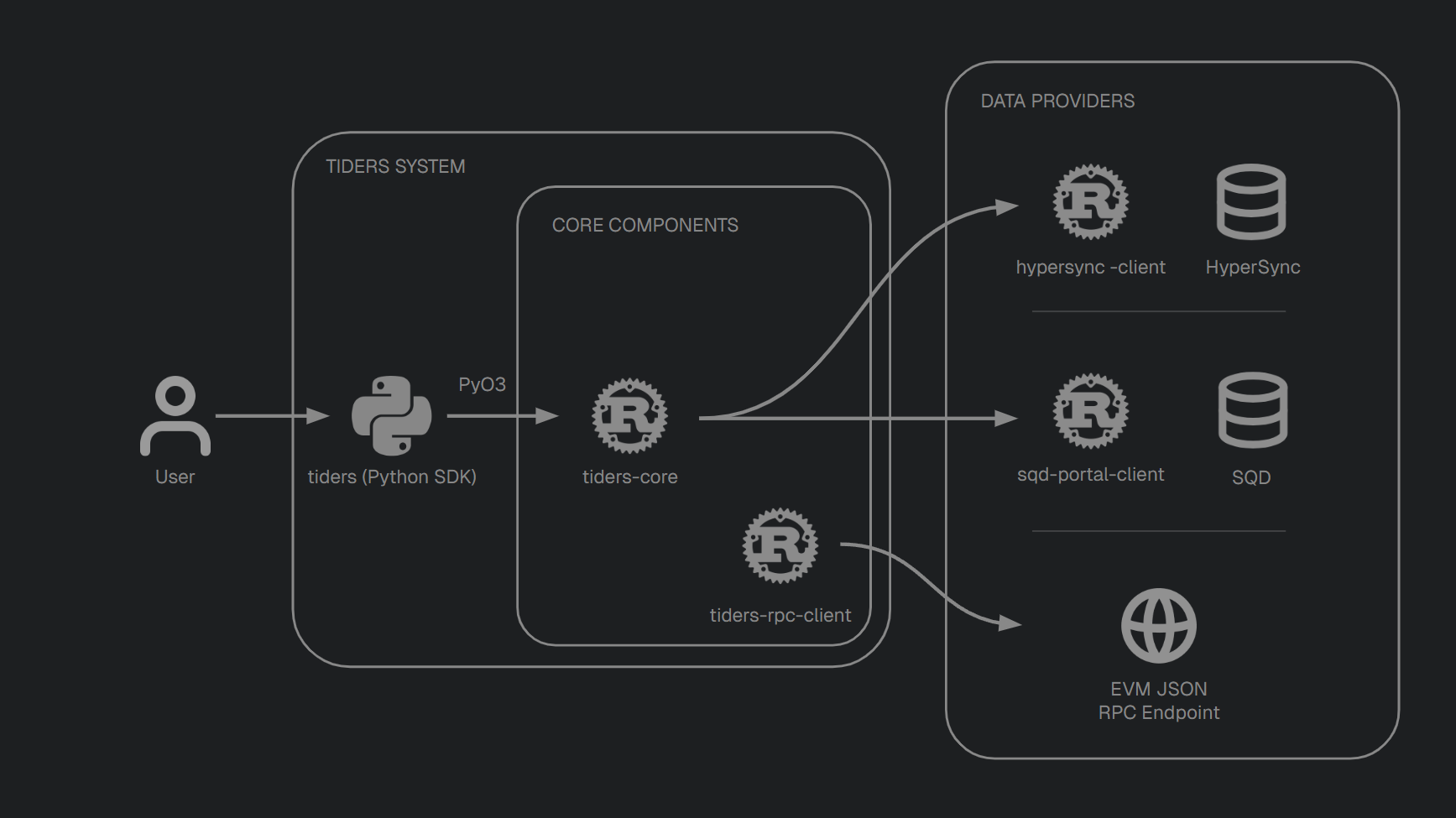

Architecture

Tiders is composed of some repositories. 3 owned ones.

| Repository | Language | Role |

|---|---|---|

| tiders | Python | User-facing SDK for building pipelines |

| tiders-core | Rust | Core libraries for ingestion, decoding, casting, and schema |

| tiders-rpc-client | Rust | RPC client for fetching data from any standard EVM JSON-RPC endpoint |

API Reference

Auto-generated Rust API documentation is available at:

Installation

CLI (No-Code Mode)

To use tiders start with a YAML config, install the cli:

pip install "tiders"

This adds everything needed to run pipelines from a YAML file.

Combine with writer extras as needed:

pip install "tiders[duckdb]"

pip install "tiders[delta_lake]"

pip install "tiders[clickhouse]"

pip install "tiders[iceberg]"

Or install everything at once:

pip install "tiders[all]"

Python SDK

To create pipelines scripts in python, install Tiders as libraries. Tiders is published to PyPI as two packages:

tiders— the Python pipeline SDKtiders-core— pre-built Rust bindings (installed automatically as a dependency)

Using pip

pip install tiders tiders-core

Using uv (recommended)

uv pip install tiders tiders-core

Optional dependencies

Depending on your selected writer or transformation engine, you may need additional packages:

| Writer | Extra package |

|---|---|

| DuckDB | duckdb |

| ClickHouse | clickhouse-connect |

| PostgreSQL | postgresql |

| Iceberg | pyiceberg |

| DeltaLake | deltalake |

For transformation steps:

| Step engine | Extra package |

|---|---|

| Polars | polars |

| Pandas | pandas |

| DataFusion | datafusion |

uv pip install tiders[duckdb, polars] tiders-core

No-Code Quick Start

Run a blockchain data pipeline without writing Python — just a YAML config file.

1. Install

pip install "tiders[duckdb]"

2. Create a config file

Create tiders.yaml:

project:

name: erc20_transfers

description: Fetch ERC-20 Transfer events and write to DuckDB.

provider:

kind: rpc

url: "https://mainnet.gateway.tenderly.co" # or change to read from the .env file ${PROVIDER_URL}

contracts:

- name: erc20

address: "0xae78736Cd615f374D3085123A210448E74Fc6393" # rETH contract, we need a erc20 reference to download the ABI.

# An abi: ./erc20.abi.json config will be added after using CLI command `tiders abi` in this folder

writer:

kind: duckdb

config:

path: data/transfers.duckdb

# Optional: resume from the last written block if the pipeline is interrupted

# checkpoint:

# table: transfers

query:

kind: evm

from_block: 18000000

to_block: 18000100

logs:

- topic0: erc20.Events.Transfer.topic0

fields:

log: [address, topic0, topic1, topic2, topic3, data, block_number, transaction_hash, log_index]

steps:

- kind: evm_decode_events

config:

event_signature: erc20.Events.Transfer.signature

output_table: transfers

allow_decode_fail: true

hstack: true

- kind: cast_by_type

config:

from_type: "decimal256(76,0)"

to_type: "decimal128(38,0)"

allow_cast_fail: true

- kind: hex_encode

3. Environment Variables

Use ${VAR_NAME} placeholders anywhere in the YAML to keep secrets and environment-specific values out of your config file. This works for any string field — provider URLs, credentials, file paths, etc.

provider:

kind: rpc

url: ${PROVIDER_URL}

bearer_token: ${PROVIDER_BEARER_TOKEN}

At startup, the CLI automatically loads a .env file from the same directory as the config file, then substitutes all ${VAR_NAME} placeholders with their values. If a variable is referenced in the YAML but not defined, the CLI raises an error.

Create a .env file alongside your config:

PROVIDER_URL=https://mainnet.gateway.tenderly.co

PROVIDER_BEARER_TOKEN=12345678

You can also point to a different .env file using the --env-file flag:

tiders start --env-file /path/to/.env tiders.yaml

4. Download ABIs

Tiders CLI provides a command to make it easy to download ABIs defined in the YAML file and save them in the folder.

tiders abi

5. Run

tiders start

The CLI auto-discovers tiders.yaml in the current directory. However, you can also pass a path explicitly:

tiders start path/tiders.yaml

5. Generate a Python script (optional)

Once your YAML pipeline is working, you can generate an equivalent Python script using tiders codegen:

tiders codegen

This reads the same YAML file and outputs a standalone Python script that constructs and runs the same pipeline using the tiders Python SDK. By default, the output file is named after the project in snake_case (e.g. erc20_transfers.py). You can specify a custom output path with -o:

tiders codegen -o my_pipeline.py

This is useful when you want to move beyond YAML and customize the pipeline logic in Python — for example, adding custom transformation steps, conditional logic, or integrating with other libraries.

Next steps

- CLI Overview — CLI commands, flags, env var substitution, config auto-discovery

- CLI YAML Reference — full reference for all YAML sections

- rETH Transfer Example — complete annotated example

Your First Pipeline

This tutorial builds a pipeline that fetches ERC-20 transfer events from Ethereum and writes them to DuckDB.

Pipeline Anatomy

Every tiders pipeline has these parts:

- Contracts — optional, helper for contract information

- Provider — where to fetch data from

- Writer — where to write the output

- Checkpoint — optional, resume from the last written block

- Query — what data to fetch

- Steps — transformations to apply

Step 1: Define the Contracts

Contracts is an optional module that makes it easier to get contract information, such as Events, Functions and their params.

Use evm_abi_events and evm_abi_functions from tiders_core. These functions take a JSON ABI string and return a list[EvmAbiEvent] / list[EvmAbiFunction] with the fields described above.

from pathlib import Path

from tiders_core import evm_abi_events, evm_abi_functions

erc20_address = '0xae78736Cd615f374D3085123A210448E74Fc6393' # rETH token contract

erc20_abi_path = Path('/home/yulesa/repos/tiders/examples/first_pipeline/erc20.abi.json')

erc20_abi_json = erc20_abi_path.read_text()

# Build a dict of events keyed by name, e.g. erc20_events["Transfer"]["topic0"]

erc20_events = {

ev.name: {

'topic0': ev.topic0,

'signature': ev.signature,

'name_snake_case': ev.name_snake_case,

'selector_signature': ev.selector_signature,

}

for ev in evm_abi_events(erc20_abi_json)}

# Build a dict of functions keyed by name, e.g. erc20_functions["approve"]["selector"]

erc20_functions = {

fn.name: {

'selector': fn.selector,

'signature': fn.signature,

'name_snake_case': fn.name_snake_case,

'selector_signature': fn.selector_signature,

}

for fn in evm_abi_functions(erc20_abi_json)}

Step 2: Define the Provider

from tiders_core.ingest import ProviderConfig, ProviderKind

provider = ProviderConfig(

kind=ProviderKind.RPC,

url='https://mainnet.gateway.tenderly.co',

)

Available providers: HYPERSYNC, SQD, RPC.

Step 3: Configure the Writer

The writer defines where transformed data is stored. DuckDB creates a local database file. Other options include ClickHouse, Delta Lake, Iceberg, PostgreSQL, PyArrow Dataset (Parquet), and CSV.

from tiders.config import DuckdbWriterConfig, Writer, WriterKind

writer = Writer(

kind=WriterKind.DUCKDB,

config=DuckdbWriterConfig(path='data/transfers.duckdb'),

)

Step 4: Configure the Checkpoint (optional)

The checkpoint lets the pipeline resume from where it left off after an interruption. At startup, tiders reads MAX(column) from the specified table and advances from_block to that value plus one. If the table is empty or does not exist, the configured from_block is used unchanged.

from tiders.config import CheckpointConfig

checkpoint = CheckpointConfig(

table="transfers", # table to read the max block from

column="block_number", # default, can be omitted

writer_index=0, # default, can be omitted

)

Step 5: Define the Query

The query defines what data to fetch: block range, filters, and fields.

from tiders_core.ingest import Query, QueryKind

from tiders_core.ingest import evm

query = Query(

kind=QueryKind.EVM,

params=evm.Query(

from_block=18000000,

to_block=18000100,

logs=[evm.LogRequest(topic0=[erc20_events["Transfer"]["topic0"]])],

fields=evm.Fields(

log=evm.LogFields(

log_index=True,

transaction_hash=True,

block_number=True,

address=True,

data=True,

topic0=True,

topic1=True,

topic2=True,

topic3=True,

),

),

),

)

Step 6: Add Transformation Steps

Steps are transformations applied to the raw data before writing. They run in order, each step’s output feeding into the next.

STEP 1 - EVM_DECODE_EVENTS:

Decodes the raw log data (topic1..3 + data) into named columns using the event signature.

- allow_decode_fail: if True, rows that fail to decode are kept (with nulls)

- hstack: if False, outputs only decoded columns; if True, append them to the original raw log columns

STEP 2 - HEX_ENCODE:

Casts all columns matching a source PyArrow type to a target type. Here it downcasts Decimal256 (the EVM uint256 wire type) to Decimal128(38,0) for DuckDB compatibility. With allow_cast_fail=True, values that overflow become null instead of raising an error.

STEP 3 - HEX_ENCODE:

Converts binary columns (addresses, hashes) to hex strings, making them human-readable and compatible with databases like DuckDB.

import pyarrow as pa

from tiders.config import CastByTypeConfig, EvmDecodeEventsConfig, HexEncodeConfig, Step, StepKind

steps = [

Step(

kind=StepKind.EVM_DECODE_EVENTS,

config=EvmDecodeEventsConfig(

event_signature="Transfer(address indexed from, address indexed to, uint256 amount)",

output_table="transfers",

allow_decode_fail=True,

hstack=True,

),

),

# Downcast Decimal256 (EVM uint256) to Decimal128 for DuckDB compatibility

Step(

kind=StepKind.CAST_BY_TYPE,

config=CastByTypeConfig(

from_type=pa.decimal256(76, 0),

to_type=pa.decimal128(38, 0),

allow_cast_fail=True,

),

),

Step(

kind=StepKind.HEX_ENCODE,

config=HexEncodeConfig(),

),

]

Step 7: Run the Pipeline

The Pipeline ties all parts together. run_pipeline() executes the full ingestion: fetch → transform → write.

import asyncio

from tiders import run_pipeline

from tiders.config import Pipeline

pipeline = Pipeline(

provider=provider,

writer=writer,

checkpoint=checkpoint, # optional

query=query,

steps=steps,

)

asyncio.run(run_pipeline(pipeline=pipeline))

Verify the Output

Verify the output by querying the DuckDB file using duckdb-cli:

duckdb data/transfers.db

SHOW TABLES;

SELECT * FROM transfers LIMIT 5;

Next Steps

- Learn about all available providers

- See the full list of transformation steps

- Explore more examples

Choosing a Database

tiders can write data to several backends. This guide helps you pick the right one and get it running.

Which Database Should I Use?

| Database | Good for | Setup difficulty |

|---|---|---|

| DuckDB | Getting started, local analysis, prototyping | None — runs in-process |

| PostgreSQL | Relational queries, joining with existing app data | Easy with Docker |

| ClickHouse | Fast analytics on large datasets, aggregations | Easy with Docker |

| Parquet files | File-based storage, sharing data, data lakes | None — writes to disk |

| CSV | Quick export, spreadsheets, simple interoperability | None — writes to disk |

| Iceberg / Delta Lake | Production data lakes with ACID transactions | Moderate — requires catalog or storage setup |

Just getting started? Use DuckDB. It requires no external services — the Your First Pipeline tutorial uses it.

Need a production database? Read on to set up PostgreSQL or ClickHouse with Docker.

DuckDB

DuckDB runs inside your Python process. No server, no Docker, no configuration.

Install

Install tiders with the DuckDB extra dependency.

pip install "tiders[duckdb]"

Querying your data in DuckDB

Open the database file directly using duckdb CLI:

duckdb data/output.duckdb

A few SQL commands to explore:

-- List all tables

SHOW TABLES;

-- Preview data

SELECT * FROM transfers LIMIT 10;

-- Count rows

SELECT count(*) FROM transfers;

-- Exit

.quit

PostgreSQL with Docker

PostgreSQL is a battle-tested relational database going back to 1996. As a row-oriented store, it underperforms in heavy analytical workloads compared to columnar databases like ClickHouse. Use it when you need to read data straight from a pipeline without post-ingestion transformation, or when you want to connect your pipeline data to an existing PostgreSQL instance.

Starting PostgreSQL with Docker

Tiders provides a ready-made Docker Compose file in the tiders/docker_postgres/ folder. Copy this file or paste its contents into your own docker-compose.yaml.

Copy the environment file and edit as needed:

cp .env.example .env

Start the container:

docker compose up -d

When you’re done, stop the database containers:

# Stop the container (data is preserved in the volume)

docker compose down

# Stop and delete all data

docker compose down -v

Install tiders with the PostgreSQL extra dependency.

pip install "tiders[postgresql]"

Querying your data in PostgreSQL with psql

psql is the interactive terminal for PostgreSQL. You can access it through your Docker container:

# Connect via Docker

docker exec -it pg_database psql -U postgres -d tiders

# Or if you have psql installed locally

psql -U postgres -d tiders -h localhost -p 5432

Common psql commands:

| Command | Description |

|---|---|

\l | List all databases |

\dt | List tables in the current database |

\d transfers | Describe a table’s columns and types |

\c dbname | Switch to a different database |

\? | Show all meta-commands |

\q | Exit psql |

Try some queries:

-- Preview your data

SELECT * FROM transfers LIMIT 10;

-- Count rows

SELECT count(*) FROM transfers;

-- Check the PostgreSQL version

SELECT version();

ClickHouse with Docker

ClickHouse is a columnar database built for analytics. It excels at aggregating millions of rows quickly — ideal for blockchain data analysis.

Starting ClickHouse with Docker

Tiders provides a ready-made Docker Compose file in the tiders/docker_clickhouse/ folder. Copy this file or paste its contents into your own docker-compose.yaml.

Copy the environment file and edit as needed:

cp .env.example .env

Start the container:

docker compose up -d

Install tiders with the ClickHouse extra dependency.

pip install "tiders[clickhouse]"

When you’re done, stop the database containers:

# Stop the container (data is preserved in the volume)

docker compose down

# Stop and delete all data

docker compose down -v

Querying your data in ClickHouse

clickhouse-client is the interactive terminal for ClickHouse. Access it through your Docker container:

# Connect via Docker

docker exec -it clickhouse-server clickhouse-client --user default --password secret --database tiders

# Or if you have clickhouse-client installed locally

clickhouse-client --host localhost --port 9000 --user default --password secret --database tiders

You can also use the ClickHouse Web SQL UI at http://localhost:8123/play (assuming default host and port).

Try some queries:

-- List all databases

SHOW DATABASES;

-- List tables in the current database

SHOW TABLES;

-- Show a table's columns and types

DESCRIBE tiders.transfers;

-- Set the default database (so you don't have to prefix with `tiders.`)

USE tiders;

-- Preview your data

SELECT * FROM transfers LIMIT 10;

-- Count rows

SELECT count() FROM transfers;

-- Exit the client (type `exit` or press Ctrl+D)

Parquet Files

Parquet is a column-oriented, binary file (not human-readable) format that offers significantly smaller file sizes, faster query performance, and built-in schema metadata compared to CSV (human-readable). Use it when you want file-based storage without running a database.

Parquet Visualizer is a VS Code extension that lets you browse and run SQL against Parquet files directly in the editor.

You can also read Parquet files with DuckDB, Pandas, Polars, or any tool that supports the format:

# With DuckDB (no server needed)

import duckdb

duckdb.sql("SELECT * FROM 'data/output/transfers/*.parquet' LIMIT 10").show()

# With Pandas

import pandas as pd

df = pd.read_parquet("data/output/transfers/")

# With Polars

import polars as pl

df = pl.read_parquet("data/output/transfers/")

Next Steps

- See the full Writers reference for all configuration options

- Build your first pipeline with the Your First Pipeline tutorial

- Explore examples for complete working pipelines

Development Setup

To develop locally across all repos, clone all three projects side by side:

git clone https://github.com/yulesa/tiders.git

git clone https://github.com/yulesa/tiders-core.git

git clone https://github.com/yulesa/tiders-rpc-client.git

Installing tiders from the local repo

If you want to modify tiders and test your changes without publishing to PyPI, install it in editable mode directly from your local clone:

cd tiders

pip install -e ".[all]"

# If using uv

uv pip install -e ".[all]"

The [all] extra installs every optional dependency (DuckDB, ClickHouse, Delta Lake, etc.). You can also install only the extras you need, e.g. ".[duckdb,cli]".

Building tiders-core and tiders-rpc-client from source

If you’re modifying tiders-rpc-client repo locally, you probably want tiders-core to build against your local version.

Build tiders-rpc-client locally:

cd tiders-rpc-client/rust

cargo build

Use local tiders-rpc-clientto build tiders-core, overriding the crates.io version:

cd tiders-core

# Build Rust crates with local tiders-rpc-client

cargo build --config 'patch.crates-io.tiders-rpc-client.path="../tiders-rpc-client/rust"'

# Build Python bindings with the same patch

cd python

maturin develop --config 'patch.crates-io.tiders-rpc-client.path="../../tiders-rpc-client/rust"'

# If using uv

maturin develop --uv --config 'patch.crates-io.tiders-rpc-client.path="../../tiders-rpc-client/rust"'

If you’re modifying tiders-core repo locally, you probably want tiders to use your local tiders-core version.

Build tiders-core as described above, or just cargo build if you haven’t modified tiders-rpc-client.

Persistent local development

For persistent local development, you can put this in tiders-core/Cargo.toml:

[patch.crates-io]

tiders-rpc-client = { path = "../tiders-rpc-client/rust" }

This avoids passing --config on every build command.

Configure tiders to use your local tiders-core Python package:

[tool.uv.sources]

tiders-core = { path = "../tiders-core/python", editable = true }

cd tiders

uv sync

Tiders Overview (Python SDK)

The tiders Python package is the primary user-facing interface for building blockchain data pipelines.

Two ways to use tiders

| Mode | How | When to use |

|---|---|---|

| Python SDK | Write a Python script, import tiders | Full control, custom logic, complex pipelines |

| CLI (No-Code) | Write a YAML config, run tiders start | Quick setup, no Python required, standard pipelines |

Both modes share the same pipeline engine. The CLI parses a YAML config into the same Python objects and calls the same run_pipeline() function.

Installation

pip install tiders tiders-core

For the CLI (no-code mode):

pip install "tiders[cli]"

Core Concepts

A pipeline is built from four components:

| Component | Description |

|---|---|

ProviderConfig | Data source (HyperSync, SQD, or RPC) |

Query | What data to fetch (block range, filters, field selection) |

Step | Transformations to apply (decode, cast, encode, custom) |

Writer | Output destination (DuckDB, ClickHouse, Iceberg, DeltaLake, Parquet) |

Basic Usage

Python

import asyncio

from tiders import config as cc, run_pipeline

from tiders_core import ingest

pipeline = cc.Pipeline(

provider=ingest.ProviderConfig(kind=ingest.ProviderKind.HYPERSYNC, url="https://eth.hypersync.xyz"),

query=query, # see Query docs

writer=writer, # see Writers docs

steps=[...], # see Steps docs

)

asyncio.run(run_pipeline(pipeline=pipeline))

yaml

provider:

kind: hypersync

url: ${PROVIDER_URL}

writer:

kind: duckdb

config:

path: data/output.duckdb

query:

kind: evm

from_block: 18000000

steps: [...]

tiders start config.yaml

Module Structure

tiders

├── config # Pipeline, Step, Writer configuration classes

├── pipeline # run_pipeline() entry point

├── cli/ # CLI entry point and YAML parser

├── writers/ # Output adapters (DuckDB, ClickHouse, Iceberg, etc.)

└── utils # Utility functions

Performance Model

tiders parallelizes three phases automatically:

- Ingestion — fetching data from the provider (async, concurrent)

- Processing — running transformation steps on each batch

- Writing — inserting into the output store

The next batch is being fetched while the current batch is being processed and the previous batch is being written.

CLI Overview (No-Code Mode)

The tiders CLI lets you run a complete blockchain data pipeline from a single YAML config file — no Python required.

tiders start config.yaml

How it works

The CLI maps 1:1 to the Python SDK — it parses the YAML into the same Python objects and calls the same run_pipeline() function:

- Parse — load the YAML file and substitute

${ENV_VAR}placeholders - Build — construct

ProviderConfig,Query,Steps, andWriterfrom the config sections - Run — call

run_pipeline(), identical to Python-mode execution

Commands

tiders start

Run a pipeline from a YAML config file.

tiders start [CONFIG_PATH] [OPTIONS]

Arguments:

CONFIG_PATH Path to the YAML config file (optional, default to use the YAML files in the folder)

Options:

--from-block INTEGER Override the starting block number from the config

--to-block INTEGER Override the ending block number from the config

--env-file PATH Path to a .env file (overrides default discovery)

--help Show this message and exit

--version Show the tiders version and exit

tiders codegen

Generate a standalone Python script from a YAML config file. The generated script constructs and runs the same pipeline using the tiders Python SDK — useful as a starting point when you need to customize beyond what YAML supports.

tiders codegen [CONFIG_PATH] [OPTIONS]

Arguments:

CONFIG_PATH Path to the YAML config file (optional, same discovery rules as start)

Options:

-o, --output PATH Output file path (defaults to <ProjectName>.py in the current directory)

--env-file PATH Path to a .env file (overrides default discovery)

--help Show this message and exit

The output filename is derived from the project.name field in the YAML, converted to snake_case (e.g. ERC20 Transfers becomes erc20_transfers.py).

Environment variables referenced in the YAML (e.g. ${PROVIDER_URL}) are emitted as os.environ.get("PROVIDER_URL") calls in the generated script, so secrets stay out of the code.

tiders abi

Fetch contract ABIs from Sourcify or Etherscan and save them as JSON files.

tiders abi [OPTIONS]

Options:

--address TEXT Contract address (single-address mode)

--chain-id TEXT Chain ID or name (default: 1). See supported chains below

--yaml-path PATH Path to YAML file with contract declarations

-o, --output PATH Output path. Single-address mode: file path. YAML mode: directory

--source [sourcify|etherscan] ABI source to try first (default: sourcify). Falls back to the other

--env-file PATH Path to a .env file (overrides default discovery)

--help Show this message and exit

Usage modes

1. Single address — fetch one ABI by contract address:

tiders abi --address 0xae78736Cd615f374D3085123A210448E74Fc6393

tiders abi --address 0xae78736Cd615f374D3085123A210448E74Fc6393 --chain-id base

2. From YAML file — fetch ABIs for all contracts declared in the YAML:

tiders abi --yaml-path pipeline.yaml #(optional, autodiscobery in current directory)

The --chain-id option in CLI or in the YAML config accept either a numeric chain ID or a chain name in some chains:

| Name | Chain ID |

|---|---|

ethereum, mainnet, ethereum-mainnet | 1 |

bnb | 56 |

base | 8453 |

arbitrum | 42161 |

polygon | 137 |

scroll | 534352 |

unichain | 130 |

Set ETHERSCAN_API_KEY in your environment or via .env file. Etherscan is skipped with a warning if not set.

Environment variables

Secrets and dynamic values are kept out of the YAML using ${VAR} placeholders:

provider:

kind: hypersync

url: ${PROVIDER_URL}

bearer_token: ${HYPERSYNC_BEARER_TOKEN}

The CLI automatically loads a .env file from the same directory as the config file before substitution. Use --env-file to point to a different location:

tiders start --env-file /path/to/.env config.yaml

An error is raised if any ${VAR} placeholder remains unresolved after substitution.

See the CLI YAML Reference for full details on all sections.

CLI YAML Reference

A tiders YAML config has seven top-level sections:

project: # pipeline metadata (required)

provider: # data source (required)

contracts: # ABI + address helpers (optional)

writer: # where to write output (required)

checkpoint: # resume from last written block (optional)

query: # what data to fetch (required)

steps: # transformation pipeline (optional)

table_aliases: # rename default table names (optional)

project

project:

name: my_pipeline # project name

description: My description. # project description

repository: https://github.com/yulesa/tiders # optional — informative only

environment_path: "../../.env" # optional — allows to override the .env file path

provider

provider:

kind: hypersync # hypersync | sqd | rpc

url: ${PROVIDER_URL}

bearer_token: ${TOKEN} # HyperSync only, optional

See Providers for full details.

contracts

Optional list of contracts. If a ABI path is defined, Tiders reads the events and functions signatures. Addresses, signatures, topic0 and ABI-derived values can be referenced by name anywhere in provider: or query:.

contracts:

- name: MyToken

address: "0xabc123..."

abi: ./MyToken.abi.json

chain_id: ethereum # numeric chain ID or a chain name for some chains

Reference syntax:

| Reference | Resolves to |

|---|---|

MyToken.address | The contract address string |

MyToken.Events.Transfer.topic0 | Keccak-256 hash of the event signature |

MyToken.Events.Transfer.signature | Full event signature string |

MyToken.Functions.transfer.selector | 4-byte function selector |

MyToken.Functions.transfer.signature | Full function signature string |

writer

See Writers for full details.

writer accepts either a single writer mapping or a list of writer mappings to write to multiple backends in parallel:

writer:

- kind: duckdb

config:

path: data/output.duckdb

- kind: csv

config:

base_dir: data/output

DuckDB

writer:

kind: duckdb

config:

path: data/output.duckdb # path to create or connect to a duckdb database

ClickHouse

writer:

kind: clickhouse

config:

host: localhost # ClickHouse server hostname

port: 8123 # ClickHouse HTTP port

username: default # ClickHouse username

password: ${CH_PASSWORD} # ClickHouse password

database: default # ClickHouse database name

secure: false # optional — use TLS, default: false

codec: LZ4 # optional — default compression codec for all columns

order_by: # optional — per-table ORDER BY columns

transfers: [block_number, log_index]

engine: MergeTree() # optional — ClickHouse table engine, default: MergeTree()

anchor_table: transfers # optional — table written last, for ordering guarantees

create_tables: true # optional — auto-create tables on first insert, default: true

Delta Lake

writer:

kind: delta_lake

config:

data_uri: s3://my-bucket/delta/ # base URI where Delta tables are stored

partition_by: [block_number] # optional — columns used for partitioning

storage_options: # optional — cloud storage credentials/options

AWS_REGION: us-east-1

AWS_ACCESS_KEY_ID: ${AWS_KEY}

anchor_table: transfers # optional — table written last, for ordering guarantees

Iceberg

writer:

kind: iceberg

config:

namespace: my_namespace # Iceberg namespace (database) to write tables into

catalog_uri: sqlite:///catalog.db # URI for the Iceberg catalog (e.g. sqlite or jdbc)

warehouse: s3://my-bucket/iceberg/ # warehouse root URI for the catalog

catalog_type: sql # catalog type (e.g. sql, rest, hive)

write_location: s3://my-bucket/iceberg/ # storage URI where Iceberg data files are written

PyArrow Dataset (Parquet)

writer:

kind: pyarrow_dataset

config:

base_dir: data/output # root directory for all output datasets

anchor_table: transfers # optional — table written last, for ordering guarantees

partitioning: [block_number] # optional — columns or Partitioning object per table

partitioning_flavor: hive # optional — partitioning flavor (e.g. hive)

max_rows_per_file: 1000000 # optional — max rows per output file, default: 0 (unlimited)

create_dir: true # optional — create output directory if missing, default: true

CSV

writer:

kind: csv

config:

base_dir: data/output # required — root directory for all output CSV files

delimiter: "," # optional, default: ","

include_header: true # optional, default: true

create_dir: true # optional — create output directory if missing, default: true

anchor_table: transfers # optional — table written last, for ordering guarantees

PostgreSQL

writer:

kind: postgresql

config:

host: localhost # required — PostgreSQL server hostname

dbname: postgres # optional, default: postgres

port: 5432 # optional, default: 5432

user: postgres # optional, default: postgres

password: ${PG_PASSWORD} # optional, default: postgres

schema: public # optional — PostgreSQL schema (namespace), default: public

create_tables: true # optional — auto-create tables on first push, default: true

anchor_table: transfers # optional — table written last, for ordering guarantees

checkpoint

The checkpoint tells the pipeline where to resume from after an interruption. At startup, tiders reads MAX(column) from table using the configured writer and sets query.from_block to that value plus one. If the table is empty or does not exist, from_block is left unchanged.

checkpoint:

table: transfers # required — table to read the max block from

column: block_number # optional — block-number column, default: "block_number"

writer_index: 0 # optional — index into the writers list, default: 0

| Field | Type | Default | Description |

|---|---|---|---|

table | string | — | Name of the destination table to query |

column | string | "block_number" | Column holding the block number |

writer_index | int | 0 | Index of the writer to read from (for multi-writer pipelines) |

query

The query defines what blockchain data to fetch: the block range, which tables to include, what filters to apply, and which fields to select.

See Query for full details on EVM and SVM query options, field selection, and request filters.

EVM

query:

kind: evm

from_block: 18000000

to_block: 18001000 # optional

include_all_blocks: false # optional

fields:

log: [address, topic0, topic1, topic2, topic3, data, block_number, transaction_hash, log_index]

block: [number, timestamp]

transaction: [hash, from, to, value]

trace: [action_from, action_to, action_value]

logs:

- topic0: "Transfer(address,address,uint256)" # signature or 0x hex

address: "0xabc..."

include_blocks: true

transactions:

- from: ["0xabc..."]

include_blocks: true

traces:

- action_from: ["0xabc..."]

SVM

query:

kind: svm

from_block: 330000000

to_block: 330001000

include_all_blocks: true

fields:

instruction: [block_slot, program_id, data, accounts]

transaction: [signature, fee]

block: [slot, timestamp]

instructions:

- program_id: ["JUP6LkbZbjS1jKKwapdHNy74zcZ3tLUZoi5QNyVTaV4"]

include_transactions: true

transactions:

- signer: ["0xabc..."]

logs:

- kind: [program, system_program]

balances:

- account: ["0xabc..."]

token_balances:

- mint: ["..."]

rewards:

- pubkey: ["..."]

steps

Steps are transformations applied to each batch of data before writing. They run in order and can decode, cast, encode, join, or apply custom logic.

See Steps for full details on each step kind.

evm_decode_events

Decode EVM log events using an ABI signature

- kind: evm_decode_events

config:

event_signature: "Transfer(address indexed from, address indexed to, uint256 amount)"

output_table: transfers # optional — name of the output table for decoded results, default: "decoded_logs"

input_table: logs # optional — name of the input table to decode, default: "logs"

allow_decode_fail: true # optional — when True rows that fails are nulls values instead of raising an error, default: False

filter_by_topic0: false # optional — when True only rows whose ``topic0`` matches the event topic0 are decoded, default: False

hstack: true # optional — when True decoded columns are horizontally stacked with the input columns, default: True

svm_decode_instructions

Decode Solana program instructions

- kind: svm_decode_instructions

config:

instruction_signature:

discriminator: "0xe517cb977ae3ad2a" # The instruction discriminator bytes used to identify the instruction type.

params: # The list of typed parameters to decode from the instruction data (after the discriminator).

- name: amount

type: u64

- name: data

type: { type: array, element: u8 }

accounts_names: [tokenAccountIn, tokenAccountOut] # Names assigned to positional accounts in the instruction.

allow_decode_fail: false # optional — when True, rows that fails are nulls values instead of raising an error, default: False

filter_by_discriminator: false # optional — when True, only rows whose data starting bytes matches the event topic0 are decoded, default: False

input_table: instructions # optional — name of the input table to decode, default: "instructions"

output_table: decoded_instructions # optional — name of the input table to decode, default: "decoded_instructions"

hstack: true # optional — when True, decoded columns are horizontally stacked with the input columns, default: True

svm_decode_logs

Decode Solana program logs

- kind: svm_decode_logs

config:

log_signature: # The list of typed parameters to decode from the log data.

params:

- name: amount_in

type: u64

- name: amount_out

type: u64

allow_decode_fail: false # optional — when True rows that fails are nulls values instead of raising an error, default: False

input_table: logs # optional — name of the input table to decode, default: "logs"

output_table: decoded_logs # optional — name of the input table to decode, default: "decoded_logs"

hstack: true # optional — when True decoded columns are horizontally stacked with the input columns, default: True

cast_by_type

- kind: cast_by_type

config:

from_type: "decimal256(76,0)" # The source pyarrow.DataType to match.

to_type: "decimal128(38,0)" # The target pyarrow.DataType to cast

allow_cast_fail: true # optional — when True, values that cannot be cast are set to null instead of raising an error, default: False

Supported type strings: int8–int64, uint8–uint64, float16–float64, string, utf8, large_string, binary, large_binary, bool, date32, date64, null, decimal128(p,s), decimal256(p,s).

cast

Cast all columns of one type to another

- kind: cast

config:

table_name: transfers # The name of the table whose columns should be cast.

mappings: # A mapping of column name to target pyarrow.DataType

amount: "decimal128(38,0)"

block_number: "int64"

allow_cast_fail: false # optional — When True, values that cannot be cast are set to null instead of raising an error, default: False

Supported type strings: int8–int64, uint8–uint64, float16–float64, string, utf8, large_string, binary, large_binary, bool, date32, date64, null, decimal128(p,s), decimal256(p,s).

hex_encode

Hex-encode all binary columns

- kind: hex_encode

config:

tables: [transfers] # optional — list of table names to process. When ``None``, all tables in the data dictionary are processed, default: None

prefixed: true # optional — When True, output strings are "0x"-prefixed, default: True

base58_encode

Base58-encode all binary columns

- kind: base58_encode

config:

tables: [instructions] # optional — list of table names to process. When ``None``, all tables in the data dictionary are processed, default: None

join_block_data

Join block fields into other tables (left outer join). Column collisions are prefixed with <block_table_name>_.

- kind: join_block_data

config:

tables: [logs] # optional — tables to join into; default: all tables except the block table

block_table_name: blocks # optional, default: "blocks"

join_left_on: [block_number] # optional, default: ["block_number"]

join_blocks_on: [number] # optional, default: ["number"]

join_evm_transaction_data

Join EVM transaction fields into other tables (left outer join). Column collisions are prefixed with <tx_table_name>_.

- kind: join_evm_transaction_data

config:

tables: [logs] # optional — tables to join into; default: all except the transactions table

tx_table_name: transactions # optional, default: "transactions"

join_left_on: [block_number, transaction_index] # optional, default: ["block_number", "transaction_index"]

join_transactions_on: [block_number, transaction_index] # optional, default: ["block_number", "transaction_index"]

join_svm_transaction_data

Join SVM transaction fields into other tables (left outer join). Column collisions are prefixed with <tx_table_name>_.

- kind: join_svm_transaction_data

config:

tables: [instructions] # optional — tables to join into; default: all except the transactions table

tx_table_name: transactions # optional, default: "transactions"

join_left_on: [block_slot, transaction_index] # optional, default: ["block_slot", "transaction_index"]

join_transactions_on: [block_slot, transaction_index] # optional, default: ["block_slot", "transaction_index"]

set_chain_id

Add a chain_id column

- kind: set_chain_id

config:

chain_id: 1 # The chain identifier to set (e.g. 1 for Ethereum mainnet).

delete_tables

Remove whole tables from the current pipeline data.

- kind: delete_tables

config:

tables:

- logs

- traces

delete_columns

Drop selected columns from one or more tables.

- kind: delete_columns

config:

tables:

transfers:

- raw_data

- topic3

rename_tables

Rename top-level tables in the current pipeline data.

- kind: rename_tables

config:

mappings:

decoded_logs: transfers # required

rename_columns

Rename columns in one or more tables.

- kind: rename_columns

config:

tables:

transfers:

from: sender

to: receiver

select_tables

Keep only the listed tables and drop the rest.

- kind: select_tables

config:

tables:

- transfers

- blocks

select_columns

Keep only the listed columns for each configured table.

- kind: select_columns

config:

tables:

transfers:

- block_number

- from

- to

- amount

reorder_columns

Move configured columns to the front of each table. Unlisted columns stay after them in their original order.

- kind: reorder_columns

config:

tables:

transfers:

- block_number

- transaction_index

- log_index

add_columns

Add constant-value columns to one or more tables. Existing columns with the same name are replaced.

- kind: add_columns

config:

tables:

transfers:

protocol: erc20

is_transfer: true

copy_columns

Copy existing columns to new names.

- kind: copy_columns

config:

tables:

transfers:

from: sender

to: receiver

prefix_columns

Add the same prefix to selected columns.

- kind: prefix_columns

config:

prefix: tx_ # required

tables:

transfers:

- hash

- from

- to

suffix_columns

Add the same suffix to selected columns.

- kind: suffix_columns

config:

suffix: _raw # required

tables:

transfers:

- data

- topic0

prefix_tables

Add the same prefix to selected table names.

- kind: prefix_tables

config:

prefix: raw_ # required

tables:

- logs

- transactions

suffix_tables

Add the same suffix to selected table names.

- kind: suffix_tables

config:

suffix: _decoded # required

tables:

- instructions

- logs

drop_empty_tables

Remove empty tables from the current pipeline data.

- kind: drop_empty_tables

config:

tables: # optional — when omitted, all tables are checked

- logs

- traces

This step also accepts an empty config to check every table:

- kind: drop_empty_tables

config: {}

sql

Run one or more DataFusion SQL queries. CREATE TABLE name AS SELECT ... stores results under name; plain SELECT stores as sql_result.

- kind: sql

config:

queries:

- >

CREATE TABLE enriched AS

SELECT t.*, b.timestamp

FROM transfers t

JOIN blocks b ON b.number = t.block_number

python_file

Load a custom step function from an external Python file. Paths are relative to the YAML config directory.

- kind: python_file

name: my_custom_step

config:

file: ./steps/my_step.py

function: transform # callable name in the file

step_type: datafusion # datafusion (default), polars, or pandas

context: # optional — passed as ctx to the function

threshold: 100

table_aliases

Rename the default ingestion table names.

EVM

table_aliases:

blocks: my_blocks # optional — name for the blocks response, default: "blocks"

transactions: my_txs # optional — name for the transactions response, default: "transactions"

logs: my_logs # optional — name for the logs response, default: "logs"

traces: my_traces # optional — name for the traces response, default: "traces"

SVM

table_aliases:

instructions: my_instructions # optional — name for the instructions response, default: "instructions"

transactions: my_txs # optional — name for the transactions response, default: "transactions"

logs: my_logs # optional — name for the logs response, default: "logs"

balances: my_balances # optional — name for the balances response, default: "balances"

token_balances: my_token_balances # optional — name for the token_balances response, default: "token_balances"

rewards: my_rewards # optional — name for the rewards response, default: "rewards"

blocks: my_blocks # optional — name for the blocks response, default: "blocks"

Providers

Providers are the data sources that tiders fetches blockchain data from. Each provider connects to a different backend service.

Choosing a Provider

- HyperSync — fast EVM historical data, allow request filtering; requires API key; Paid features

- SQD — fast, supports both EVM and SVM, allow request filtering; decentralized; Paid features

- RPC — works with traditional RPC, don’t allow request filtering; useful when other providers don’t support your chain

Unlike HyperSync and SQD, the RPC provider does not support server-side filtering of transactions or traces. That’s because RPC methods like eth_getBlockByNumber and trace_block return all data in a block with no filtering options. Setting TransactionRequest or TraceRequest with filtering fields (e.g. from_ =0xabc.., to=0xabc..., status=success) will produce an error.

Similarly, field selection does not improve performance with the RPC provider — all fields are always fetched from the node and unselected fields are dropped client-side.

Alternatively, you can ingest all data and filter post-indexing in your tiders pipeline or database instead. See the RPC querying docs for details.

Available Providers

| Provider | EVM (Ethereum) | SVM (Solana) | Description |

|---|---|---|---|

| HyperSync | Yes | No | High-performance indexed data |

| SQD | Yes | Yes | Decentralized data network |

| RPC | Yes | No | Any standard EVM JSON-RPC endpoint |

Configuration

All providers use ProviderConfig from tiders_core.ingest:

from tiders_core.ingest import ProviderConfig, ProviderKind

Common Parameters

These parameters are available for all providers:

| Parameter | Type | Default | Description |

|---|---|---|---|

kind | ProviderKind | — | Provider backend (hypersync, sqd, rpc) |

url | str | None | Provider endpoint URL. If None, uses the provider’s default |

bearer_token | str | None | Authentication token for protected APIs |

stop_on_head | bool | false | If true, stop when reaching the chain head; if false, keep polling indefinitely |

head_poll_interval_millis | int | None | How frequently (ms) to poll for new blocks when streaming live data |

buffer_size | int | None | Number of responses to buffer before sending to the consumer |

max_num_retries | int | None | Maximum number of retries for failed requests |

retry_backoff_ms | int | None | Delay increase between retries in milliseconds |

retry_base_ms | int | None | Base retry delay in milliseconds |

retry_ceiling_ms | int | None | Maximum retry delay in milliseconds |

req_timeout_millis | int | None | Request timeout in milliseconds |

RPC-only Parameters

| Parameter | Type | Default | Description |

|---|---|---|---|

batch_size | int | None | Number of blocks fetched per batch |

compute_units_per_second | int | None | Rate limit in compute units per second |

reorg_safe_distance | int | None | Number of blocks behind head considered safe from chain reorganizations |

trace_method | str | None | Trace API method: "trace_block" or "debug_trace_block_by_number" |

HyperSync

Python

provider = ProviderConfig(

kind=ProviderKind.HYPERSYNC,

url="https://eth.hypersync.xyz",

bearer_token = HYPERSYNC_TOKEN

)

yaml

provider:

kind: hypersync

url: ${PROVIDER_URL}

bearer_token: ${HYPERSYNC_BEARER_TOKEN} # optional

SQD

Python

provider = ProviderConfig(

kind=ProviderKind.SQD,

url="https://portal.sqd.dev/datasets/ethereum-mainnet",

)

yaml

provider:

kind: sqd

url: ${PROVIDER_URL}

RPC

Use any standard EVM JSON-RPC endpoint (Alchemy, Infura, QuickNode, local node, etc.):

Python

provider = ProviderConfig(

kind=ProviderKind.RPC,

url="https://eth-mainnet.g.alchemy.com/v2/YOUR_KEY",

)

yaml

provider:

kind: rpc

url: ${PROVIDER_URL}

stop_on_head: true # optional, default: false

trace_method: trace_block # optional — trace_block or debug_trace_block_by_number

The RPC provider uses tiders-rpc-client under the hood, which supports adaptive concurrency, retry logic, and streaming.

Contracts

Contracts is an optional module that makes it easier to get contract information, such as Events, Functions and their params.

When you define a contract, tiders parses the ABI JSON file and extracts all events and functions with their signatures, selectors, and topic hashes — so you don’t have to compute or hard-code them yourself.

Event/Functions Fields

Each event/function parsed from the ABI exposes:

| Field | Type | Description |

|---|---|---|

name | str | Event/Function name (e.g. "Swap") |

name_snake_case | str | Event/Function name in snake_case (e.g. "matched_orders") |

signature | str | Human-readable signature with types, names and indexed markers (events) (e.g. "Swap(address indexed sender, address indexed recipient, int256 amount0)") |

selector_signature | str | Canonical signature without names (e.g. "Swap(address,address,int256)") |

topic0 | str | (Event only) Keccak-256 hash of the selector signature, as 0x-prefixed hex |

selector | str | (Function-only) 4-byte function selector as 0x-prefixed hex |

YAML Usage

In YAML configs, define contracts under the contracts: key. Tiders automatically parses the ABI and makes all values available via reference syntax.

contracts:

- name: MyToken

address: "0xae78736Cd615f374D3085123A210448E74Fc6393"

abi: ./MyToken.abi.json # abi path

chain_id: ethereum # numeric chain ID or a chain name in some chains (used to download the ABI in the CLI command `tiders abi`)

Reference Syntax

Once a contract is defined, tiders parse will automatically extract ABI information, so you can reference its address, events, and functions by name anywhere in query: or steps: sections:

| Reference | Resolves to |

|---|---|

MyToken.address | The contract address string |

MyToken.Events.Transfer.name | Event name |

MyToken.Events.Transfer.topic0 | Keccak-256 hash of the event signature |

MyToken.Events.Transfer.signature | Full event signature string |

MyToken.Events.Transfer.name_snake_case | Event name in snake_case |

MyToken.Events.Transfer.selector_signature | Canonical event signature without names |

MyToken.Functions.transfer.selector | 4-byte function selector |

MyToken.Functions.transfer.signature | Full function signature string |

MyToken.Functions.transfer.name_snake_case | Function name in snake_case |

MyToken.Functions.transfer.selector_signature | Canonical function signature without names |

Python Usage

Use evm_abi_events and evm_abi_functions from tiders_core. These functions take a JSON ABI string and return a list[EvmAbiEvent] / list[EvmAbiFunction] with the fields described above.

from pathlib import Path

from tiders_core import evm_abi_events, evm_abi_functions

# Contract address

my_token_address = "0xae78736Cd615f374D3085123A210448E74Fc6393"

# Load ABI

abi_path = Path("./MyToken.abi.json")

abi_json = abi_path.read_text()

# Parse events — dict keyed by event name

events = {

ev.name: {

"topic0": ev.topic0,

"signature": ev.signature,

"name_snake_case": ev.name_snake_case,

"selector_signature": ev.selector_signature,

}

for ev in evm_abi_events(abi_json)

}

# Parse functions — dict keyed by function name

functions = {

fn.name: {

"selector": fn.selector,

"signature": fn.signature,

"name_snake_case": fn.name_snake_case,

"selector_signature": fn.selector_signature,

}

for fn in evm_abi_functions(abi_json)

}

You can then use the parsed values in your query and steps:

query = Query(

kind=QueryKind.EVM,

params=evm.Query(

from_block=18_000_000,

logs=[

evm.LogRequest(

address=[my_token_address],

topic0=[events["Transfer"]["topic0"]],

),

],

),

)

steps = [

Step(

kind=StepKind.EVM_DECODE_EVENTS,

config=EvmDecodeEventsConfig(

event_signature=events["Transfer"]["signature"],

),

),

]

Query

The query defines what blockchain data to fetch: the block range, which tables to include, what filters to apply, and which fields to select. Queries are specific to a blockchain type (Kind), and can be either:

- EVM (for Ethereum and compatible chains) or

- SVM (for Solana).

Each query consists of a request to select subsets of tables/data (block, logs, instructions) and field selectors to specify what columns should be included in the response for each table.

Structure

from tiders_core.ingest import Query, QueryKind

A query has:

kind—QueryKind.EVMorQueryKind.SVMparams— chain-specific query parameters

EVM Queries

Python

from tiders_core.ingest import evm

query = Query(

kind=QueryKind.EVM,

params=evm.Query(

from_block=18_000_000, # required

to_block=18_001_000, # optional — defaults to chain head

include_all_blocks=False, # optional — include blocks with no matching data

logs=[evm.LogRequest(...)], # optional — log filters

transactions=[evm.TransactionRequest(...)], # optional — transaction filters

traces=[evm.TraceRequest(...)], # optional — trace filters

fields=evm.Fields(...), # field selection

),

)

yaml

query:

kind: evm

from_block: 18000000

to_block: 18001000 # optional — defaults to chain head

include_all_blocks: false # optional — default: false

logs: [...]

transactions: [...]

traces: [...]

fields: {...}

EVM Table filters

The logs, transactionsand traces params enable fine-grained row filtering through [table]Request objects. Each request individually filters for a subset of rows in the tables. You can combine multiple requests to build complex queries tailored to your needs. Except for blocks, table selection is made through explicit inclusion in a dedicated request or an include_[table] parameter.

Log Requests

Filter event logs by contract address and/or topic. All filter fields are combined with OR logic within a field and AND logic across fields.

Python

evm.LogRequest(

address=["0xabc..."], # optional — list of log emitter addresses

topic0=["0xabc..."], # optional — list of keccak256 hash or event signature

topic1=["0xabc..."], # optional — list of first indexed parameter

topic2=["0xabc..."], # optional — list of second indexed parameter

topic3=["0xabc..."], # optional — list of third indexed parameter

include_transactions=False, # optional — include parent transaction

include_transaction_logs=False, # optional — include all logs from matching txs

include_transaction_traces=False, # optional — include traces from matching txs

include_blocks=True, # optional — include block data

)

yaml

query:

kind: evm

logs:

- address: "0xdabc..." # optional

topic0: "Transfer(address,address,uint256)" # optional — signature or 0x hex hash

topic1: "0xabc..." # optional

topic2: "0xabc..." # optional

topic3: "0xabc..." # optional

include_transactions: false # optional, default: false

include_transaction_logs: false # optional, default: false

include_transaction_traces: false # optional, default: false

include_blocks: true # optional, default: false

Transaction Requests

Filter transactions by sender, recipient, function selector, or other fields. Filtering transaction data at the source in a request is not supported by standart ETH JSON-RPC calls of RPC providers.

All filter fields are combined with OR logic within a field and AND logic across fields.

Python

evm.TransactionRequest(

from_=["0xabc..."], # optional — list of sender addresses

to=["0xabc..."], # optional — list of recipient addresses

sighash=["0xa9059cbb"], # optional — list of 4-byte function selectors (hex)

status=[1], # optional — list of status, 1=success, 0=failure

type_=[2], # optional — list of type, 0=legacy, 1=access list, 2=EIP-1559

contract_deployment_address=["0x..."],# optional — list of deployed contract addresses

hash=["0xabc..."], # optional — list of specific transaction hashes

include_logs=False, # optional — include emitted logs

include_traces=False, # optional — include execution traces

include_blocks=False, # optional — include block data

)

yaml

query:

kind: evm

transactions:

- from: ["0xabc..."] # optional

to: ["0xabc..."] # optional

sighash: ["0xa9059cbb"] # optional

status: [1] # optional

type: [2] # optional

contract_deployment_address: ["0x..."] # optional

hash: ["0xabc..."] # optional

include_logs: false # optional, default: false

include_traces: false # optional, default: false

include_blocks: false # optional, default: false

Trace Requests

Filter execution traces (internal transactions). Filtering trace data at the source in a request is not supported by standart ETH JSON-RPC calls of RPC providers.

All filter fields are combined with OR logic within a field and AND logic across fields.

Python

evm.TraceRequest(

from_=["0xabc..."], # optional — list of caller addresses

to=["0xabc..."], # optional — list of callee addresses

address=["0xabc..."], # optional — list of ontract addresses in the trace

call_type=["call"], # optional — list of call types, "call", "delegatecall", "staticcall"

reward_type=["block"], # optional — list of reward_type, "block", "uncle"

type_=["call"], # optional — list of trace type, "call", "create", "suicide"

sighash=["0xa9059cbb"], # optional — list of 4-byte function selectors

author=["0xabc..."], # optional — list of block reward author addresses

include_transactions=False, # optional — include parent transaction

include_transaction_logs=False, # optional — include logs from matching txs

include_transaction_traces=False, # optional — include all traces from matching txs

include_blocks=False, # optional — include block data

)

yaml

query:

kind: evm

traces:

- from: ["0xabc..."] # optional

to: ["0xabc..."] # optional

address: ["0xabc..."] # optional

call_type: ["call"] # optional — call, delegatecall, staticcall

reward_type: ["block"] # optional — block, uncle

type: ["call"] # optional — call, create, suicide

sighash: ["0xa9059cbb"] # optional

author: ["0xabc..."] # optional

include_transactions: false # optional, default: false

include_transaction_logs: false # optional, default: false

include_transaction_traces: false # optional, default: false

include_blocks: false # optional, default: false

EVM Field Selection

Select only the columns you need. All fields default to false.

Python

evm.Fields(

block=evm.BlockFields(number=True, timestamp=True, hash=True),

transaction=evm.TransactionFields(hash=True, from_=True, to=True, value=True),

log=evm.LogFields(block_number=True, address=True, topic0=True, data=True),

trace=evm.TraceFields(from_=True, to=True, value=True, call_type=True),

)

yaml

query:

kind: evm

fields:

block: [number, timestamp, hash]

transaction: [hash, from, to, value]

log: [block_number, address, topic0, data]

trace: [from, to, value, call_type]

Available Block Fields

number, hash, parent_hash, nonce, sha3_uncles, logs_bloom, transactions_root, state_root, receipts_root, miner, difficulty, total_difficulty, extra_data, size, gas_limit, gas_used, timestamp, uncles, base_fee_per_gas, blob_gas_used, excess_blob_gas, parent_beacon_block_root, withdrawals_root, withdrawals, l1_block_number, send_count, send_root, mix_hash

Available Transaction Fields

block_hash, block_number, from, gas, gas_price, hash, input, nonce, to, transaction_index, value, v, r, s, max_priority_fee_per_gas, max_fee_per_gas, chain_id, cumulative_gas_used, effective_gas_price, gas_used, contract_address, logs_bloom, type, root, status, sighash, y_parity, access_list, l1_fee, l1_gas_price, l1_fee_scalar, gas_used_for_l1, max_fee_per_blob_gas, blob_versioned_hashes, deposit_nonce, blob_gas_price, deposit_receipt_version, blob_gas_used, l1_base_fee_scalar, l1_blob_base_fee, l1_blob_base_fee_scalar, l1_block_number, mint, source_hash

Available Log Fields

removed, log_index, transaction_index, transaction_hash, block_hash, block_number, address, data, topic0, topic1, topic2, topic3

Available Trace Fields

from, to, call_type, gas, input, init, value, author, reward_type, block_hash, block_number, address, code, gas_used, output, subtraces, trace_address, transaction_hash, transaction_position, type, error, sighash, action_address, balance, refund_address

SVM Queries

Python

from tiders_core.ingest import svm

query = Query(

kind=QueryKind.SVM,

params=svm.Query(

from_block=330_000_000,

to_block=330_001_000, # optional — defaults to chain head

include_all_blocks=False, # optional — include blocks with no matching data

instructions=[svm.InstructionRequest(...)], # optional

transactions=[svm.TransactionRequest(...)], # optional

logs=[svm.LogRequest(...)], # optional

balances=[svm.BalanceRequest(...)], # optional

token_balances=[svm.TokenBalanceRequest(...)], # optional

rewards=[svm.RewardRequest(...)], # optional

fields=svm.Fields(...),

),

)

yaml

query:

kind: svm

from_block: 330000000

to_block: 330001000 # optional — defaults to chain head

include_all_blocks: false # optional — default: false

instructions: [...]

transactions: [...]

logs: [...]

balances: [...]

token_balances: [...]

rewards: [...]

fields: {...}

SVM Table filters

The instructions, transactions, logs, balances , token_balances, rewards and fields params enable fine-grained row filtering through [table]Request objects. Each request individually filters for a subset of rows in the tables. You can combine multiple requests to build complex queries tailored to your needs. Except for blocks, table selection is made through explicit inclusion in a dedicated request or an include_[table] parameter.

Instruction Requests

Filter Solana instructions by program, discriminator, or account. Discriminator and account filters (d1–d8, a0–a9) use OR logic within a field and AND logic across fields.

Python

svm.InstructionRequest(

program_id=["JUP6LkbZbjS1jKKwapdHNy74zcZ3tLUZoi5QNyVTaV4"], # optional — list of program ids, base58

discriminator=["0xe445a52e51cb9a1d40c6cde8260871e2"], # optional — list of discriminators, bytes or hex

d1=["0xe4"], # optional — list of 1-byte data prefix filter

d2=["0xe445"], # optional — list of 2-byte data prefix filter

d4=["0xe445a52e"], # optional — list of 4-byte data prefix filter

d8=["0xe445a52e51cb9a1d"], # optional — list of 8-byte data prefix filter

a0=["0xabc..."], # optional — list of account at index 0 (base58)

a1=["0xabc..."], # optional — list of account at index 1

# a2–a9 follow the same pattern

is_committed=False, # optional — only committed instructions

include_transactions=True, # optional — include parent transaction

include_transaction_token_balances=False, # optional — include token balance changes

include_logs=False, # optional — include program logs

include_inner_instructions=False, # optional — include inner (CPI) instructions

include_blocks=True, # optional — default: true

)

yaml

query:

kind: svm

instructions:

- program_id: ["JUP6LkbZbjS1jKKwapdHNy74zcZ3tLUZoi5QNyVTaV4"] # optional

discriminator: ["0xe445a52e51cb9a1d40c6cde8260871e2"] # optional

d1: ["0xe4"] # optional

d2: ["0xe445"] # optional

d4: ["0xe445a52e"] # optional

d8: ["0xe445a52e51cb9a1d"] # optional

a0: ["TokenkegQfeZyiNwAJbNbGKPFXCWuBvf9Ss623VQ5DA"] # optional

is_committed: false # optional, default: false

include_transactions: true # optional, default: false

include_transaction_token_balances: false # optional, default: false

include_logs: false # optional, default: false

include_inner_instructions: false # optional, default: false

include_blocks: true # optional, default: true

Transaction Requests (SVM)

Filter Solana transactions by fee payer.

Python

svm.TransactionRequest(

fee_payer=["0xabc..."], # optional — list of fee payer public keys (base58)

include_instructions=False, # optional — include all instructions

include_logs=False, # optional — include program logs

include_blocks=False, # optional — include block data

)

yaml

query:

kind: svm

transactions:

- fee_payer: ["0xabc..."] # optional

include_instructions: false # optional, default: false

include_logs: false # optional, default: false

include_blocks: false # optional, default: false

Log Requests (SVM)

Filter Solana program log messages by program ID and/or log kind.

Python

svm.LogRequest(

program_id=["JUP6LkbZbjS1jKKwapdHNy74zcZ3tLUZoi5QNyVTaV4"], # optional — list of program ids

kind=[svm.LogKind.LOG], # optional — list of kinds, log, data, other

include_transactions=False, # optional — include parent transaction

include_instructions=False, # optional — include the emitting instruction

include_blocks=False, # optional — include block data

)

yaml

query:

kind: svm

logs:

- program_id: ["JUP6LkbZbjS1jKKwapdHNy74zcZ3tLUZoi5QNyVTaV4"] # optional

kind: [log] # optional — log, data, other

include_transactions: false # optional, default: false

include_instructions: false # optional, default: false

include_blocks: false # optional, default: false

Balance Requests

Filter native SOL balance changes by account.

Python

svm.BalanceRequest(

account=["0xabc..."], # optional — list of account public keys (base58)

include_transactions=False, # optional — include parent transaction

include_transaction_instructions=False, # optional — include transaction instructions

include_blocks=False, # optional — include block data

)

yaml

query:

kind: svm

balances:

- account: ["0xabc..."] # optional — list of accounts

include_transactions: false # optional, default: false

include_transaction_instructions: false # optional, default: false

include_blocks: false # optional, default: false

Token Balance Requests

Filter SPL token balance changes. Pre/post filters match the state before and after the transaction.

Python

svm.TokenBalanceRequest(

account=["0xabc..."], # optional — list of token account public keys (base58)

pre_program_id=["TokenkegQ..."], # optional — list of token program ID before tx

post_program_id=["TokenkegQ..."], # optional — list of token program ID after tx

pre_mint=["0xabc..."], # optional — list of token mint address before tx

post_mint=["0xabc..."], # optional — list of token mint address after tx

pre_owner=["0xabc..."], # optional — list of token account owner before tx

post_owner=["0xabc..."], # optional — list of token account owner after tx

include_transactions=False, # optional — include parent transaction

include_transaction_instructions=False, # optional — include transaction instructions

include_blocks=False, # optional — include block data

)

yaml

query:

kind: svm

token_balances:

- account: ["0xabc..."] # optional

pre_mint: ["0xabc..."] # optional

post_mint: ["0xabc..."] # optional

pre_owner: ["0xabc..."] # optional

post_owner: ["0xabc..."] # optional

pre_program_id: ["TokenkegQ..."] # optional

post_program_id: ["TokenkegQ..."] # optional

include_transactions: false # optional, default: false

include_transaction_instructions: false # optional, default: false

include_blocks: false # optional, default: false

Reward Requests

Filter Solana validator reward records by public key.

Python

svm.RewardRequest(

pubkey=["0xabc..."], # optional — list of validator public keys (base58)

include_blocks=False, # optional — include block data

)

yaml

query:

kind: svm

rewards:

- pubkey: ["0xabc..."] # optional

include_blocks: false # optional, default: false

SVM Field Selection

Select only the columns you need. All fields default to false.

Python

svm.Fields(

instruction=svm.InstructionFields(block_slot=True, program_id=True, data=True),

transaction=svm.TransactionFields(signature=True, fee=True),

log=svm.LogFields(program_id=True, message=True),

balance=svm.BalanceFields(account=True, pre=True, post=True),

token_balance=svm.TokenBalanceFields(account=True, post_mint=True, post_amount=True),

reward=svm.RewardFields(pubkey=True, lamports=True, reward_type=True),

block=svm.BlockFields(slot=True, hash=True, timestamp=True),

)

yaml

query:

kind: svm

fields:

instruction: [block_slot, program_id, data]

transaction: [signature, fee]

log: [program_id, message]

balance: [account, pre, post]

token_balance: [account, post_mint, post_amount]

reward: [pubkey, lamports, reward_type]

block: [slot, hash, timestamp]

Available Instruction Fields

block_slot, block_hash, transaction_index, instruction_address, program_id, a0–a9, rest_of_accounts, data, d1, d2, d4, d8, error, compute_units_consumed, is_committed, has_dropped_log_messages

Available Transaction Fields (SVM)

block_slot, block_hash, transaction_index, signature, version, account_keys, address_table_lookups, num_readonly_signed_accounts, num_readonly_unsigned_accounts, num_required_signatures, recent_blockhash, signatures, err, fee, compute_units_consumed, loaded_readonly_addresses, loaded_writable_addresses, fee_payer, has_dropped_log_messages

Available Log Fields (SVM)

block_slot, block_hash, transaction_index, log_index, instruction_address, program_id, kind, message

Available Balance Fields

block_slot, block_hash, transaction_index, account, pre, post

Available Token Balance Fields